SimpleDev

Web Dev Person / Ex Performance ECU Calibrations Person

- 8 Posts

- 39 Comments

3·1 year ago

Yep that’s a pretty good comparison!

I’m curious what you mean by sourcing training data in an ethical way. I know OpenAI has come under well-deserved scrutiny for apparently using paywalled content in its training data without purchasing access, which is quite unethical. Aside from that instance, I’m interested in hearing some other concerns for my own education.

In general there are definitely loads of models on places like Hugging Face that are fully open source, and many of them list their training data sources.

I believe that for Microsoft’s new Phi 3 models they actually generated synthetic training data themselves, which is an interesting approach that seems to yield good results.

In the open source LLM world the new Meta Llama 3 models are the latest and greatest, and I haven’t seen any cause for concern with them yet. Might be worth looking into those!

10·1 year ago

I haven’t personally tried it with Ollama yet, but it should work, since it looks like Ollama can expose an OpenAI-format API: https://github.com/ollama/ollama/blob/main/docs/openai.md

I might give it a go here in a bit to test and confirm.

15·1 year ago

Local models are indeed already supported! In fact, any API (local or otherwise) that uses the OpenAI response format (which is the standard) will work.

So you can use something like LM Studio to host a model locally and connect to it via the local API it spins up.

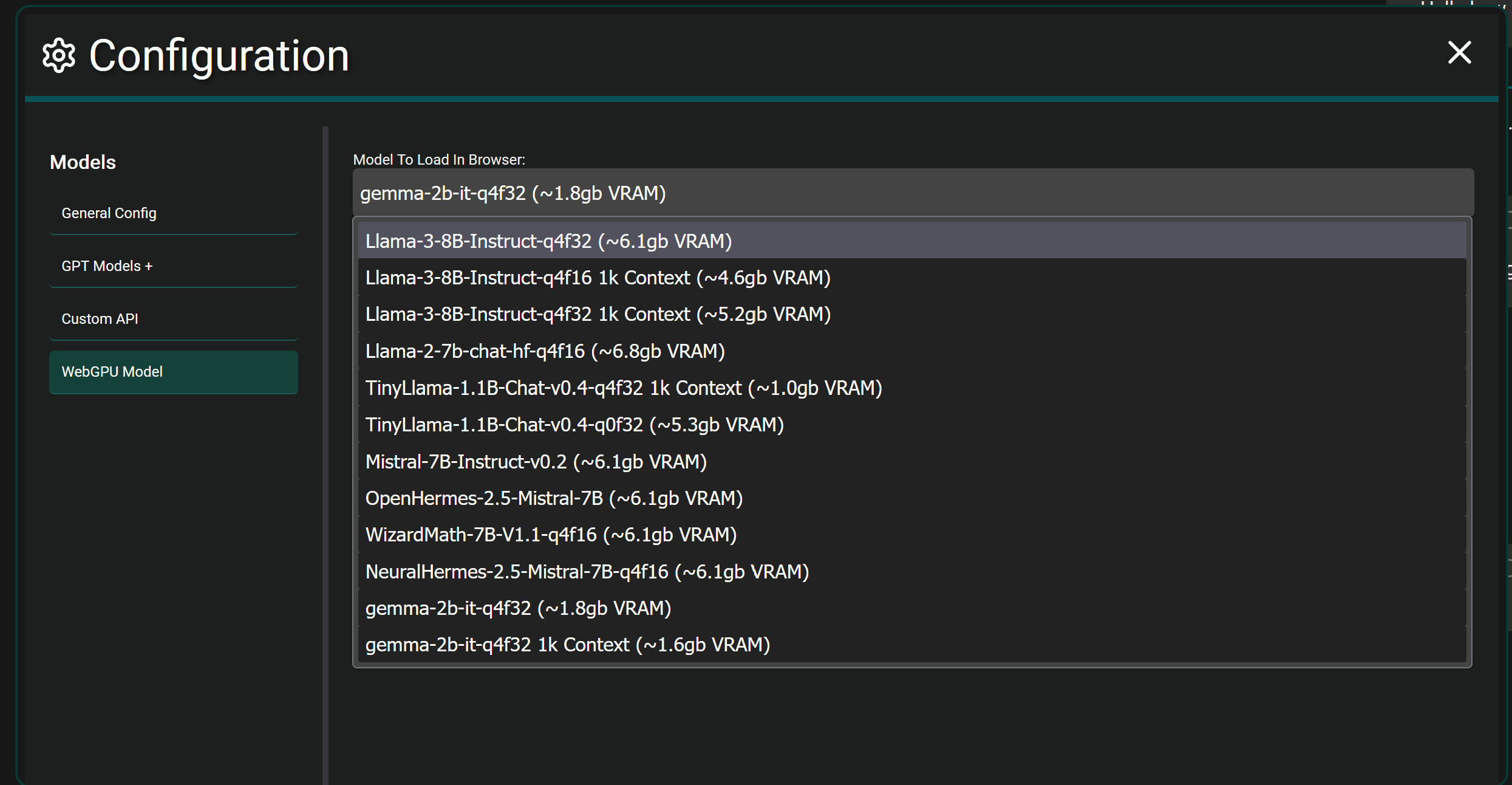
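To make the "OpenAI response format" point concrete, here is a minimal sketch of how a client might build a chat request for any such endpoint. This is not the app's actual code: `buildChatRequest` and `extractReply` are hypothetical helper names, and the base URLs mentioned afterwards (`http://localhost:1234/v1` for LM Studio, `http://localhost:11434/v1` for Ollama) are assumed defaults that may differ on your machine.

```typescript
// Minimal sketch: build a chat request for any OpenAI-format endpoint.
// Helper names are hypothetical; they are not taken from the app.

type Role = "system" | "user" | "assistant";

interface ChatMessage {
  role: Role;
  content: string;
}

interface ChatRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Returns everything needed for a fetch() call, without doing any I/O.
function buildChatRequest(
  baseUrl: string,
  model: string,
  messages: ChatMessage[],
  apiKey?: string
): ChatRequest {
  const headers: Record<string, string> = { "Content-Type": "application/json" };
  // Hosted services need a key; a local server may not, so it is optional.
  if (apiKey) {
    headers["Authorization"] = `Bearer ${apiKey}`;
  }
  return {
    // Strip trailing slashes so we never produce "//chat/completions".
    url: `${baseUrl.replace(/\/+$/, "")}/chat/completions`,
    method: "POST",
    headers,
    body: JSON.stringify({ model, messages }),
  };
}

// Pull the assistant's text out of an OpenAI-format response body.
function extractReply(responseJson: string): string {
  const parsed = JSON.parse(responseJson) as {
    choices?: { message?: { content?: string } }[];
  };
  return parsed.choices?.[0]?.message?.content ?? "";
}
```

Something like `const req = buildChatRequest("http://localhost:1234/v1", "local-model", messages)` followed by `fetch(req.url, { method: req.method, headers: req.headers, body: req.body })` would then talk to a locally hosted model, and swapping the base URL and key is all it should take to point at a hosted service instead.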

If you want to get crazy…fully local browser models are also supported in Chrome and Edge currently. It will download the selected model in full, run it in your browser via WebGPU, and let you chat. It’s more experimental and takes actual hardware power since you’re fully hosting a model in your browser itself. As seen below.

11·1 year ago

This app is more of an interface to use while connecting to any number of LLMs that have an API available. The application itself has no model.

For example you can choose to use GPT-4 Omni by providing an API key from OpenAI.

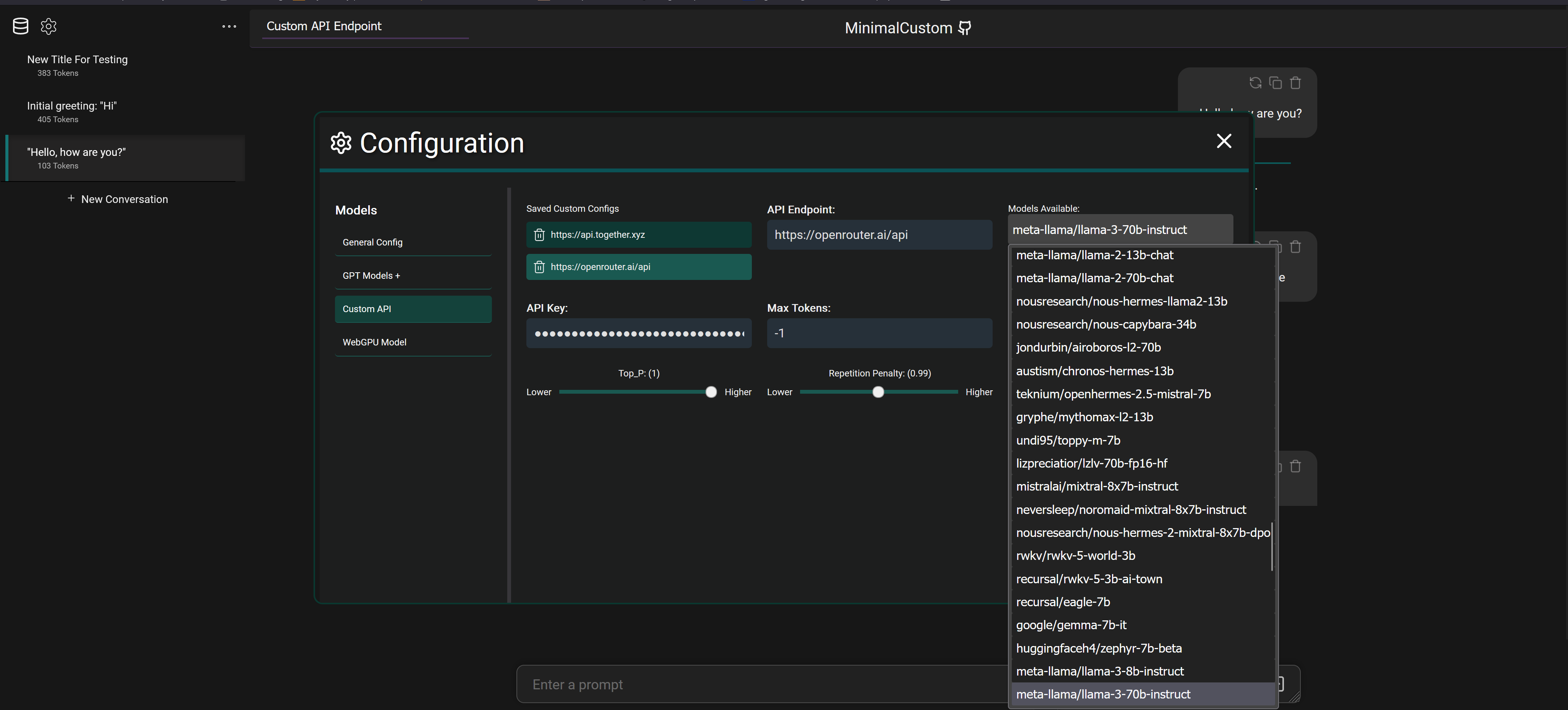

But you can also connect to services like OpenRouter with an API key and select between 20+ different models that they provide access to, as seen below.

It also supports connecting to fully local models via programs like LM Studio, which downloads models from Hugging Face to your machine and spins up a local API to connect to and chat with the model.
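Since the app is only an interface, choosing a backend mostly comes down to a base URL plus an optional API key. A rough sketch of that idea follows. The provider table and `resolveProvider` are hypothetical and not how the app is necessarily structured, and the LM Studio address is an assumed default.

```typescript
// Sketch of a provider table for an "interface only" chat app.
// Provider keys and resolveProvider are hypothetical names.

interface Provider {
  baseUrl: string;
  requiresKey: boolean;
}

const PROVIDERS: Record<string, Provider> = {
  openai: { baseUrl: "https://api.openai.com/v1", requiresKey: true },
  openrouter: { baseUrl: "https://openrouter.ai/api/v1", requiresKey: true },
  // Assumed LM Studio default; the port can be changed in LM Studio.
  lmstudio: { baseUrl: "http://localhost:1234/v1", requiresKey: false },
};

interface Connection {
  baseUrl: string;
  headers: Record<string, string>;
}

// Look up a provider and build the headers every request to it will share.
function resolveProvider(name: string, apiKey?: string): Connection {
  const provider = PROVIDERS[name];
  if (!provider) {
    throw new Error(`Unknown provider: ${name}`);
  }
  if (provider.requiresKey && !apiKey) {
    throw new Error(`Provider "${name}" needs an API key`);
  }
  const headers: Record<string, string> = { "Content-Type": "application/json" };
  if (apiKey) {
    headers["Authorization"] = `Bearer ${apiKey}`;
  }
  return { baseUrl: provider.baseUrl, headers };
}
```

With this shape, `resolveProvider("lmstudio")` works without a key, while `resolveProvider("openai")` fails early with a clear message instead of sending an unauthenticated request.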

3·2 years ago

I use the F-GT Lite as my main rig and have no real complaints. It’s everything I would want from a midrange foldable rig.

I do want to add a bass shaker or two and I think it’ll be a perfectly reasonable cockpit for 95% of people.

Edit - I of course would always welcome as solid a wheel mounting system as possible. The Lite has some wiggle but it can be compensated for with a few tweaks.

2·2 years ago

Interesting, thanks for the info!

I wasn’t aware of the update process being used as an attack vector (if it’s still a thing), gonna have to read up more on that.

41·2 years ago

I used Apple for the last few years until recently and I can’t say I’ve ever really noticed stuff like apps faking being another app. That’s not to say it doesn’t happen of course.

I do know the Apple app approval process is definitely more strict than what is required for the Play Store.

I’m not very experienced with Apple or Android development so I’d be curious to hear from devs that use both platforms as well.

31·2 years ago

Wow they really didn’t try at all to be even somewhat secure.

Well you’ll probably really enjoy This Video haha!

The MGU-H mode was on the “motor” so it doesn’t count for this challenge but still that’s the absolute most I can get out of the car!

I’m right on the edge of my ability through a lot of this lap, proper sweaty attempt.

I’ll run in the correct mode for an official lap if I end up needing to, no worries there. At most the advantage was a few tenths as ERS deployment per lap is limited.

Moar people need to join up and give it a go!

Yessir, there’s a bit more left on the table but I’m pretty happy with a ~29.8!

At least until someone beats me hah

Hah, funnily enough Alonso is my all time favorite driver and I have admired his driving style for many years.

-

Yeah Degna 1 is flat if you just close your eyes and huck it in!

-

I don’t think I’ve ever taken Spoon curve in any game or car and thought I did it right, it always feels so wrong to me. I think that attempt was the closest I’ve been to feeling I got it right.

The 1:29’s are clearly within grasp for both of us, I feel I left a few tenths on the table at the hairpin alone with the lockup and horrible line.

-

Very nice lap! It definitely looks like you tend to be a driver with really smooth and clean inputs, whereas I’ve noticed I tend to wrestle things a bit, at least in qualifying. I’m always fascinated by driving style differences between drivers who are still relatively equal in speed.

I just happened to have set a new lap myself, just to give you a bit of a headache 😆

Sounds good to me!

You know…I may have run in hotlap mode as well just out of quali habit.

I’ll have to run again in the default mode this weekend and post that time just to be sure. I don’t want any advantage haha

Good thing you pointed that out lol

Edit - Though I would argue for hotlaps it would make sense to be able to use whatever default onboard controls the vehicle has imo.

Thanks! I honestly had no idea what a decent lap was with this car/track hah.

It’s definitely a track you have to be really precise at (in this car especially) otherwise you go slightly off line and run just wide enough in places to dip a wheel in the grass and around you go.

Really really fun though, especially when you get it just right!

Looking forward to seeing if I can improve a bit more this coming weekend. I’m sure you’ll catch up to me soon enough lmao.

It’s not much but I’ll kick us off!

| Laptime | Video | Comments |
| --- | --- | --- |
| 1:30.515 | N/A | N/A |
| 1:30.072 | Video | So close to the 29’s! A couple of obvious mistakes sadly. |
| 1:29.866 | Video | A bit more on the edge now! Still a few clear mistakes made, leaning jussttt a tad too hard on the brakes at a few points causing some minor lockups. Track and Car both feel really good! |

Edit - Bonus full send lap (MGU-H in motor mode) for fun!

I disliked Signal app-wise, and the Matrix app was a buggy mess for me and the 4 other people who tried to use it as well.

SimpleX was easy to set up and has been stable for the most part for all of us.

Basically to answer your question, people like different things.

SimpleX isn’t perfect by any means but it seems to be developed at a somewhat decent pace with noticeable improvements being made.

171·2 years ago

As a dev it’s nice to check all the official guideline boxes, as a user I’d much rather actually have features.

Agreed, I’m all for some variability in what sim and cars we use!

This project is entirely web based using Vue 3. It doesn’t use LangChain, and honestly I haven’t looked into it before, but I do see they offer a JS library I could utilize. I’ll definitely be looking into that!

As a result there is no LLM function calling currently, and from what I remember apps like LM Studio don’t support function calling when hosting models locally. It’s definitely on my list to add the ability to retrieve outside data, like searching the web and generating a response with the results, etc…
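For anyone curious what function calling could look like if it gets added, here is a rough sketch using the OpenAI-style `tools` format. None of this exists in the project today: `search_web`, `searchWeb`, and `handleToolCall` are hypothetical names, and the search itself is a stub that returns a placeholder instead of hitting a real search API.

```typescript
// Sketch of OpenAI-style function calling; not something the project does today.
// searchWeb is a hypothetical stub standing in for a real search backend.

interface ToolCall {
  id: string;
  type: "function";
  function: { name: string; arguments: string }; // arguments is a JSON string
}

interface ToolResultMessage {
  role: "tool";
  tool_call_id: string;
  content: string;
}

// Tool definition that would be sent in the request's "tools" array.
const searchTool = {
  type: "function",
  function: {
    name: "search_web",
    description: "Search the web and return short result snippets.",
    parameters: {
      type: "object",
      properties: { query: { type: "string" } },
      required: ["query"],
    },
  },
};

// Hypothetical stub; a real version would call a search API here.
function searchWeb(query: string): string[] {
  return [`Placeholder result for "${query}"`];
}

// Run the tool the model asked for and wrap the output as a "tool" message.
function handleToolCall(call: ToolCall): ToolResultMessage {
  if (call.function.name !== searchTool.function.name) {
    throw new Error(`Unsupported tool: ${call.function.name}`);
  }
  const args = JSON.parse(call.function.arguments) as { query?: string };
  if (typeof args.query !== "string") {
    throw new Error("search_web requires a string 'query' argument");
  }
  const results = searchWeb(args.query);
  return { role: "tool", tool_call_id: call.id, content: JSON.stringify(results) };
}
```

The returned message would be appended to the conversation and sent back to the model so it can write its final answer using the search results. Whether a given local backend honors the `tools` field at all is something to verify per server.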