- cross-posted to:

- technology@lemmy.ml

Developers get code questions wrong 63% of the time, soooo

One of the main reasons was how detailed ChatGPT’s answers are. In many cases, participants did not mind the length if they are getting useful information from lengthy and detailed answers. Also, positive sentiments and politeness of the answers were the other two reasons.

Man, this answer is long, detailed, polite… it’s great!

Sure, but it’s wrong. It’s just complete bullshit.

Yeah, sure… still…

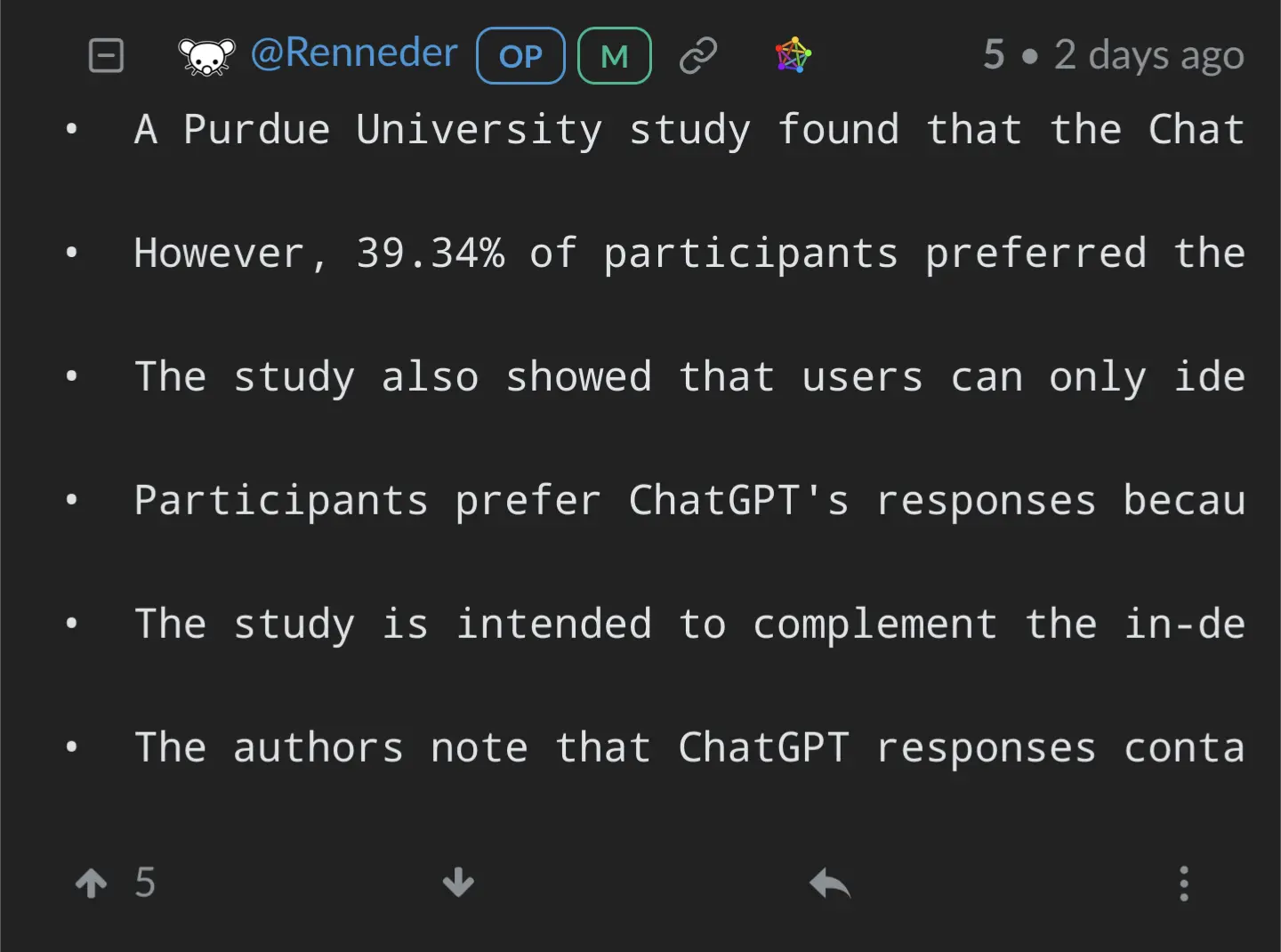

- A Purdue University study found that the ChatGPT OpenAI bot gave incorrect answers to programming questions half the time.
- However, 39.34% of participants preferred the ChatGPT responses due to their completeness and well-formulated language style.
- The study also showed that users can only identify errors in ChatGPT responses when they are obvious.
- Participants prefer ChatGPT's responses because of its polite language, articulated textbook-style responses, and comprehensiveness.
- The study is intended to complement the in-depth guidance and linguistic analysis of ChatGPT responses.
- The authors note that ChatGPT responses contain more "driving attributes" but do not describe risks as often as Stack Overflow posts.

When you put 4 spaces at the start of each line, it renders as a code block. Are you doing that on purpose? I don’t see why you would want your comments to use a monospace font.

In addition, in Sync for Lemmy, it breaks words in the middle when wrapping, which makes it odd to read. It’s even worse in a web browser, where the user has to scroll sideways to read each line.

Screenshot:

A glorified Google search fails at answering novel or hard problems that either haven’t been answered before or have been answered badly.

52% is an understatement

Title feels misleading: it gets Stack Overflow questions wrong 52% of the time.

However, it got 77% of easy LeetCode questions correct. Also, I believe that’s on the first try, which is not generally how ChatGPT should be used.

Also also, you should probably be using a coding-specific model if you want good coding results.

Every LeetCode question has been answered a billion times, and if you train it on those billions of answers, it should get those right.

Probably because the model has seen thousands of possible solutions to those exact LeetCode problems. Actual questions people ask on Stack Overflow tend to be much more specialized.

But it confidently explains the wrong answers.

I just hope politicians don’t find out how to use it. It’ll be our doom.